At the MCP Dev Summit NA in New York on April 2–3, the Agentic AI Foundation brought together a roundtable with the MCP Project maintainers: Clare Liguori of AWS, David Soria Parra of Anthropic, Caitie McCaffrey of Microsoft, and Nick Cooper of OpenAI, with Stephen O’Grady of RedMonk moderating. The conversation covered the state of MCP at a moment of exceptional growth, from enterprise adoption and governance to security, implementation quality, and the claim that CLI-based agents have made the protocol less relevant. Here are some of the main points from the discussion.

MCP is still growing quickly

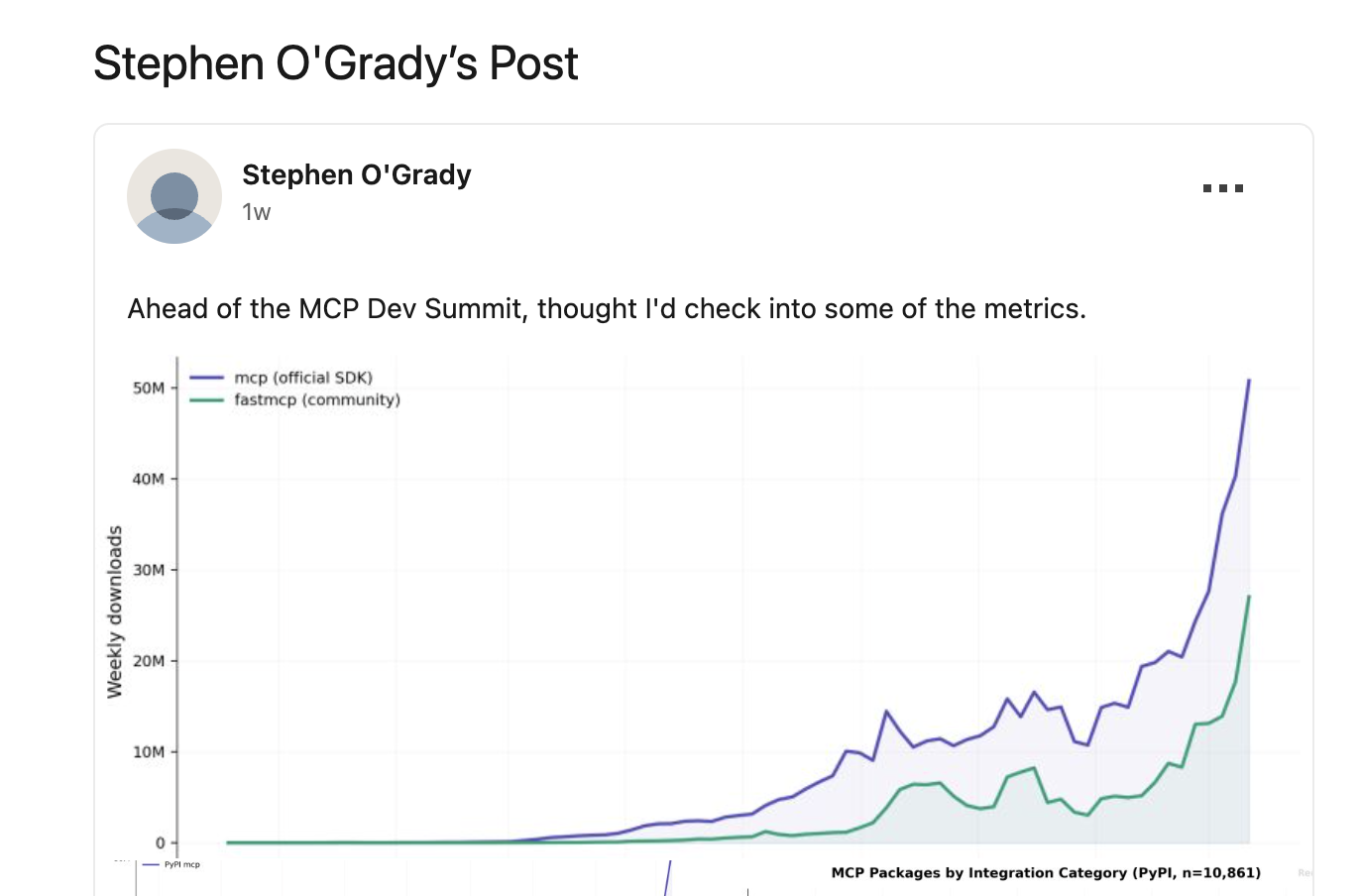

O’Grady opened with the growth numbers. By RedMonk’s estimate, Docker took about 13 months to become a household name; MCP did it in 13 weeks. He added that recent package-registry charts for MCP SDK downloads still look like a “hockey stick up and to the right.”

That puts the rest of the discussion in context. The panel was not discussing how to generate interest in MCP. It was discussing what follows a rapid breakout: how the protocol is governed, how it fits into enterprise architecture, and how implementation quality improves as adoption spreads.

The enterprise case centers on control

“MCP has been super valuable for a lot of customers because it provides that control point, that really clearly indicates this is an agent,” said Liguori. “That’s where I see the value of MCP remaining … the ability to put policy and governance and registry and authentication in place, to be able to restrict what agents can do.”

This is a more precise description of MCP than generic interoperability language. The panel’s view was that MCP gives enterprises a place to identify agent activity and attach controls to it. In that framing, MCP is useful not only because it connects systems, but because it helps structure and govern access to them. That is a practical argument, and it fits the way enterprise adoption usually unfolds: governance first, scale after.

CLI-based agents do not eliminate the need for MCP

On the claim that CLI-based agents make MCP unnecessary, the panel did not reject CLI-based workflows. It argued that they fit different scenarios. “We ship APIs, we ship SDKs, and we ship CLIs … because we want to meet developers where they are and we want to meet them at the scenario that they’re working in,” said McCaffrey. Cooper put it more broadly: “there’s not going to be one protocol through them all.”

The point was that local and supervised developer workflows may work well with CLIs, especially where the user is present and the environment is tightly bound. But that does not remove the need for a protocol designed for repeatable, policy-aware access in enterprise and web environments. MCP, in this view, is not a universal answer. It is a durable answer for a specific and important class of interactions, including many cases where direct CLI access would be too open-ended from a governance or security standpoint.

Security is now a central design issue

“Security and authentication has been one of the most actively changing parts of the MCP specification of the past year,” said Cooper. That said, McCaffrey added that “no one protocol is going to solve all security challenges.”

Those two points together sketch a realistic picture of where MCP stands. First, the protocol is already changing in response to security concerns. Second, MCP is only one part of the answer. The maintainers described a broader setup that could include gateways, policy systems, registries, and identity controls around the protocol. They did not present MCP as a self-contained security framework. They presented it as one layer in a larger control architecture that now has to evolve alongside agent adoption.

Implementation quality is now a main issue

“The reality is, we all have different customers. We have different needs. And when we come together, we really learn in the best possible way what the industry needs as a whole, and from there, we incubate or work with standardization organizations or protocol developers like ourselves in order to make the right decision for the industry,” said Soria Parra. “I think that’s what I’m really excited about.”

That shifts the focus from getting servers built to making sure that there is clear guidance on design and deployment, with a defined roadmap for the future. Broadly, the maintainers’ view was that the ecosystem now needs narrower scope, clearer evaluation, and interfaces designed for model use rather than simply exposing existing APIs in bulk. That is a different problem from the one MCP faced a year ago. The early phase rewarded speed and experimentation. The next phase depends more on clearer patterns, stronger guidance, and a better shared understanding of what a well-designed MCP server actually looks like. Cooper reinforced the point later in the session, saying the project now needs to “codify these best practices and practical things that people can take home and use.”

Building for the long haul

A broader point ran through the discussion: how to make sure MCP remains relevant and durable for years to come. Rapid adoption and growing enterprise maturity suggest the project is entering a second phase, one defined by refinement rather than emergence. “For the project and its governance itself, little has actually changed,” said Soria Parra, who added that MCP has retained its “very bottoms up” open-source character. At the same time, he said, the project’s connection to the foundation and its members is giving the maintainers more direct input from enterprise users and other real-world deployments — feedback that can be folded back into the protocol as it evolves.

Check out all the talks from this event on the AAIF YouTube channel.